Govern AI-driven engineering before it becomes a risk.

AI agents generate code.

Your organization still owns the consequences.

Asplenz Knowledge gives your agents a structured decision layer, so they don't have to guess.

AI velocity changed. Governance didn't.

AI-assisted development is becoming the default in many teams.

Agents now:

- introduce dependencies

- modify APIs

- refactor architecture

- deploy infrastructure

But they don't know:

- your architectural decisions

- your non-negotiable security invariants

- which exceptions were approved

- what must never change

Without a declared decision layer, agents guess.

Asplenz Knowledge

Operational Decision Governance for AI Systems

Knowledge is a declarative system of record for your decisions, rules, invariants, and approved exceptions.

Agents query this normative state before acting.

Knowledge does not block execution; it exposes the applicable decision state. Your ecosystem decides.

MCP-native: compatible with the Model Context Protocol. Built for modern AI coding environments and CI pipelines.

Agents call Knowledge on demand; its content is not injected into every prompt. No per-prompt token overhead. No bloated system prompts. Six tools, called only when the agent needs context.

From declaration to enforcement

Declaring rules is not enough.

Knowledge Verifier (Premium Add-on)

Knowledge Verifier analyzes every pull request against your declared normative state.

It:

- Resolves applicable scope

- Detects invariant and rule violations

- Validates override usage

- Enforces Implementation Report citations

- Produces a CI-ready verdict (pass | warn | fail)

- Generates a human-readable Compliance Report

You choose the mode:

- Report-only

- Fail on blocking violations

- Strict (citations enforced)

What operational governance looks like

Instead of:

"We think this change is fine."

You get:

Impact: 2 dependent rules, 1 active override on this scope. No tensions detected.

Every merge is explicitly evaluated against declared constraints.

No tribal knowledge. No invisible assumptions.

Who this is for

- Engineering teams using AI coding tools

- Organizations introducing autonomous agents

- Platform & security teams defining invariants

- CTOs who want velocity without architectural drift

Different by design

Knowledge is not:

- A code quality scanner like SonarQube

- A vulnerability scanner

- A generic policy engine like Open Policy Agent

- A prompt

Those tools evaluate code or execute rules. Knowledge structures the decisions themselves.

It governs:

- Why a rule exists

- Who approved an exception

- What replaced what

- Which constraints are non-negotiable

It operates at the normative layer.

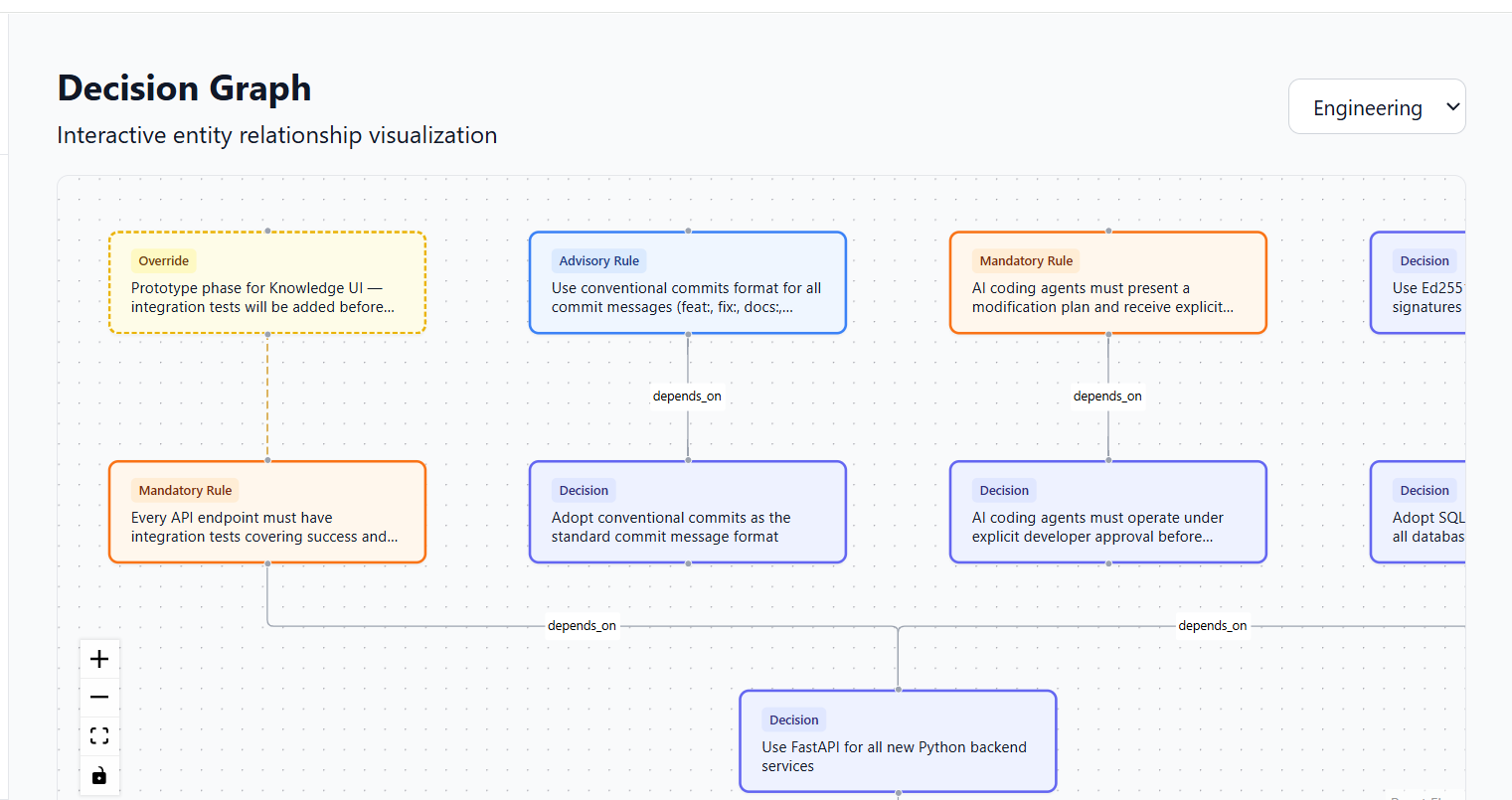

Knowledge doesn't store rules as a flat list. It structures them as a navigable graph. Every rule links to the decision that justifies it. Every override links to the rule it exempts. Every change surfaces what it affects. You can trace why a constraint exists, what would break if you changed it, and where your normative state contradicts itself.

No policy engine does this. They execute rules. Knowledge connects them.

A note on Evidence

Asplenz also builds Evidence, an independent product for sealing high-stakes decisions and generating cryptographically verifiable proof artifacts.

Evidence addresses a different layer of governance: immutable proof for critical commitments.

Start free with the AI Registry: inventory your AI systems, classify them under the EU AI Act, and see where your proof gaps are.

Human-readable. System-executable.

Engineers can:

- Retrieve past decisions and rationale

- See when and why a rule changed

- Understand approved exceptions

CI pipelines can:

- Evaluate PRs against invariants

- Validate overrides

- Produce structured verdicts

Agents can:

- Query applicable rules before acting

One normative state. Used by humans and systems.

Start governing decisions at AI speed

Agents act.

Organizations remain accountable.

Asplenz Knowledge makes that sustainable.